8 June 2012

I'm freezing this blog as read-only, new blog @ ofoghlu.net/blog

As of today I'm freezing this blog as read-only:

http://www.ofoghlu.net/log (Old blog - frozen)

I'll keep all the generated files, with image and other links intact, as I migrate to a new server.

I'll then use a completely new paradigm for maintaining my new blog, that I hope will start to be a bit more regular again, and contain more code snippets. The new tools will make this easier for me:

http://www.ofoghlu.net/blog (new blog - active)

This will use Octopress and Jekyll to manage content. See the first post in the new blog for details.

Migration is in progress, DNS lag means it will take a day to propagate so it points to the new server where the new blog is (I've manually copied the initial sync of the new blog to the old server so the link works in the overlap period).

8 June 2011

World IPv6 Day today

Today is World IPv6 Day, organised by the Internet Society (ISOC). Internet Society - World IPv6 Day.

Read the "About IPv6" section of the Irish IPv6 Task Force website if this means nothing to you :-)

Many people are experimenting with turning on IPv6 for the first time, and/or turning off IPv4 to see if the IPv6 infrastructure can cope.

So today is a good day to test your own machine to see how well it can cope with the next generation internet protocol, IPv6: http://www.testipv6.com.

In Ireland there is a good IPv6 readiness site, http://www.ipv6ready.ie, sponsored by the INEX, the Dublin Internet Neutral Exchange.

The Irish IPv6 Task Force has also a website for the day, http://www.ipv6day.ie, the site includes a monitoring tooolset that monitors IPv6 readiness for high profile public and private sector Irish internet sites.

23 September 2004

Web 2.0 Conference (O'Reilly)

This looks like a good conference: John Battelle's Searchblog: Web 2.0 Draws Near

21 September 2004

CircleID's Channels

The list of CircleID's channels map out the key issues facing the Internet today. The articles in these channels can be accessed via the web portal or via RSS feeds.

CircleID ChannelsAs a crucial part of Internet's core functionality, the global directory structure, better know as the Domain Name System, has been a subject matter filled with technical and political challenges that have grown (and continue to grow) into numerous specific branches. CircleID channels identify key Internet related 'tension points' that members are invited to discuss, write about, comment on, analyze, offer solutions for, criticize, and gain value from.

Internet Governance

Since the creation of the Internet Corporation for Assigned Names and Numbers (ICANN), the regulation of the Internet has become a heated political theme. Internet users have criticized every aspect of governing bodies from questioning the reasons for the existence of such institutes to the nature of the policies put in place and the methods used to achieve them. Others defend the existence of centralized governing organizations and the involvement of government. How do we reach consensus in a borderless geography and open frontier?Empowering DNS

Domain Name System (DNS) is a global directory designed to map names to Internet Protocol addresses and vice versa. DNS contains billions of records, answers billions of queries, and accepts millions of updates from millions of users on an average day. Security and robustness of DNS determines the stability of the Internet and its continuous enhancement will remain a critical factor.Top-Level Domains

Top Level Domains (TLDs) divide the Internet namespace into sectors and geographical regions. Management and use of existing TLDs, as well as the introduction of new generic Top Level Domains are matters that are still evolving and in need of good planning and sound decisions.Registrars

Much like other Internet ventures, the business of buying and selling domain names is passed the so called gold rush and the bubble period and is now moving towards maturity and viability. The initially simple domain name registration process, handled by one organization, is now a global industry with fierce competition and intense price wars. It is filled with over one 100 accredited registrars, thousands and thousand of resellers, is facing controversies over a growing second-hand market and gaining many other specialty services. The naming business is indeed significant business!Legal Issues

Today domain name disputes are daily occurrences that involve domain name thefts, Uniform Domain Name Dispute Resolution Policies (UDRP), cybersquatting, typosquatting, trademark protections, and more. They are issues that have caused regional and international concerns. Others have challenged the very laws that dictate the decisions made in courts. Is it time to call for yet further legal enforcements or time to question the law itself?Addressing Spam

While spam continues to be a major issue for all individuals and organizations on the Internet, increasing levels of effort are made to fight this problem from every possible angle -- technically and legally. Some however argue that the best way to fight spam is through Internets very core infrastructure. In other words, we need to have closer look at the current state of Internet Protocol addressing and the domain name system.Privacy Matters

When it comes to the Internet, the definition of Privacy tends to be an ambiguous concept that has been difficult to reach consensus on. Issues related to the collection, maintenance, use, disclosure, accuracy and processing of private information are surrounded with numerous types of debates. What is the true cost of privacy to individuals, society and to the business world? Regardless of what is decided today and tomorrow one thing is for certain; privacy matters!IP Address & Beyond

Even the best Internet visionaries in the early 1980s could not imagine the dilemma of scale that the Internet would come to face. With realistic projections of many millions of interconnected networks in the not too distant future, the Internet faces the dilemma of choosing between limiting the rate of growth and ultimate size of the Internet, or disrupting the network by changing to new techniques or technologies. Whether it is still too early or not, the shift has begun from the current Internet Protocol (IPv4) to IPv6, the next generation Internet protocol. Consequently, where are we today with IPv4? What does tomorrow hold with IPv6? And how do we deal with the challenges in the road in between?ENUM Convergence

The first and most notable convergence protocol developed to make telephone numbers recognizable by the Domain Name System (DNS). ENUM or Electronic Number Mapping offers the potential for people to be contacted through multiple media channels such as email, fax and mobile phones. While many see ENUM convergence as a significant progress, others have raised questions concerning the types of services to be offered, privacy and security issues. So what are the future implications? Welcome to the technological, commercial, and regulatory world of ENUM convergence.Internationalized DN

Today, many efforts are underway in the Internet community to make domain names available in character sets other than ASCII. As the Internet has spread to non-English speaking people around the globe, it has become increasingly apparent that forcing the world to use domain names written only in a subset of the Latin alphabet is not ideal. Internationalized Domain Names (IDN) is considered a global trend and is likely to be adopted by a large number of websites.Exploring Frontlines

To date, millions of domain names have been registered around the world. A high percentage of domain names registered remain unused and many others serve the purpose of protecting corporate trademarks. There are also a growing number of domain names that are being used in creative ways that leverage the true power of the Internet. What is really going on in the frontlines of the cyberspace?

Can TCP/IP Survive?

This is an article coming out of the 'Internet Mark 2 Project': Can TCP/IP Survive?

The following article is an excerpt from the recently released Internet Analysis Report 2004 - Protocols and Governance. Full details of the argument for protocol reform can be found at 'Internet Mark 2 Project' website, where a copy of the Executive Summary can be downloaded free of charge. The report also comments on the response of governance bodies to these issues........

Assessment

TCP -- if not TCP/IP -- needs to be replaced, probably within a five to ten year time frame. The major issue to overcome is the migration issues (see below)

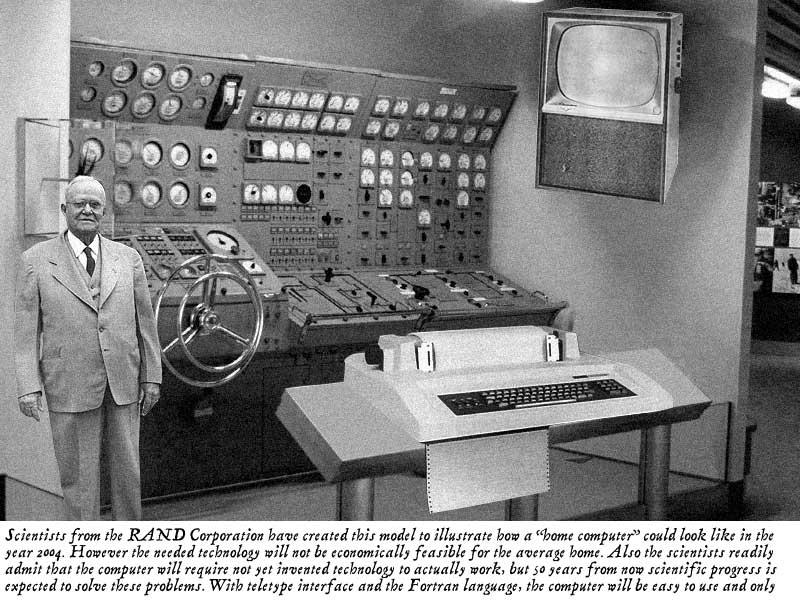

1954's Home of the Future

In 1954 RAND predicted that this would be the Home Computer of 2004, and would be easy to use and programmed in Fortran! Ummm.

Thanks to: Metafilter.

UPDATE 2004-09-29 Okay it looks like this was a hoax; still pretty funny though RAND Hoax: "The picture is actually an entry submitted to an image modification competition, taken from an original photo of a submarine maneuvering room console found on U.S. Navy web site, converted to grayscale, and modified to replace a modern display panel and TV screen with pictures of a decades-old teletype/printer and television (as well as to add the gray-suited man to the left-hand side of the photo)"

Tim Berners Lee (Interviews on Internet.com)

I've been reading John Battelle's searchblog now for about a month and it's definitely staying on my blogroll. This recent posting alterted me to an interesting interview: John Battelle's Searchblog: Tim Berners Lee Interviewed

It is now nearly 15 years since Tim and his colleagues in CERN developed initial prototypes of what we know know as the Web. I remember well the first time I heard of it at the Network Services Conference, October 1992 in Pisa, Italy (the call for participation is still on-line: NSC92). This was for European academic networking community including a nice mixture of librarians and IT folks. I was blown away by two talks: John S. Quarterman's talk on Internet growth (which was pretty impressive even prior to the Web), and Jean-Francois Groff's talk on "World-Wide Web: global hypertext coming true" that was written by Tim, Jean-Francois and another of their colleagues in CERN, Robert Cailliau. Jean-Francois described the clients that were available or planned: dumb, PC, Mac, X-Windows and NeXt Cube. We could try it out a text-based client by telnetting to info.cern.ch (128.141.201.74)! It wasn't long after this I got into Linux (SLS and then Slackware) and deployed a CERN httpd server in University College Galway, Ireland where I worked at the time. Later I deployed the UCG official webserver.

As one would expect, the interview with Tim focsues on the emerging semantic web, his vision of where the Web is going. Interestingly he is also very excited about the mobile web, the area where I am now working with colleagues in the Telecommunications Software & Systems Group.

I still beleive that good research in communications software requires active collaboration and co-oeration between different academic disciplies. For the Web it was librarians/archivists and computer scientists. Now it is these plus telecommunications engineers and many other disciplines. The challenge is to learn from our experiences in all these domains and aggregate knowledge to produce really usfeul systems based on simple principles. It can be done, just look at the Web!

For more infromation see the W3C: A Little History of the World Wide Web and CERN: What are CERN's greatest achievements?.

mLearning (Mobile Learning) in Korea

Well, I had predicted that it would happen: mobile devices being used to enhance the learning environment, in this case with the introduction of a Personal Multimedian Player (PMP). As usual these days in the mobile world, I suppose, the trend is being led up by Korea: Smart Mobs: Mobile learning that links to a Korea Times report stating that students can: "use personal gadgets to study instead of textbooks on the bus or subway." I wonder if they're IPv6 devices, it sounds from the report that they are more like MP3 players with video that are not always on-line. The same device seems to be marketed as an iRiver PMP-100 outside Korea (that's the picture).

EMusic: Lesser known music distribution

A site called EMusic tries to be an independent distribution channel for music The New York Times > Technology > A Music Download Site for Artists Less Known

17 September 2004

RSSify a directory

Andrew Grumet has written a tool to turn a directory into an RSS feed so that subscribers can see and download new files as they appear Andrew Grumet's Weblog: Version 0.3 of dir2rss

16 September 2004

Bellheads and Netheads

A recent Jon Udell article alerted me for the first time to the terms "Bellheads" and "Netheads" to refer, respectively, to telecoms people and computer network people. Jon refers back to an old posting of his own that quotes this report: Netheads versus Bellheads

Research into Emerging Policy Issues in the Development and Deployment of Internet Protocols Final Report For the Federal Department of Industry (Contract Number U4525-9-0038) T.M.Denton Consultants, Ottawa with Fran輟is M駭ard and David Isenberg. This report is no no longer on-line (but is archived PDF on archive.org). The terms live on live on the Internet in this set of slides/pages Netheads versus Bellheads.

The research centre I work in, the TSSG, is all about the convergence of these technologies, particulary in the mobile internet (2.5G, 3G). We try and have a good mix of Bellheads and Netheads!

Nokia and NEC test IMS (mobile IP)

Two industry heavyweights have completed interoperability tests of a new technology designed to drive the convergence of voice, data, and video services over wireless and fixed infrastructure based on the Internet Protocol.

Perl for XML parsing

A useful up-to-date summary of XML.com: Perl Parser Performance by Petr Cimprich on O'Reilly's http://www.xml.com.

15 September 2004

Perl for .NET

The afternoon started of with a session on Perl for .NET Environment by Jonathan Stowe. I assume Jonthan will update his computing links page with details of this talk in time :-)

Notable in the audience was a strong presence of ActiveState dudes, well known for their excellent support of Perl (and other dynamic languages like Python and Tcl) for Windows platforms, and sell excellent tools, and give stuff away as open source as well. I'm sure this topic is key for them.

There was a brief discussion of reuse, and the obvious fact the the Comprehensive Perl Archive Network (CPAN) is the word's largest open library of reusuable code was mentioned. The stats today are: online since 1995-10-26; 2507 MB 260 mirrors; 3863 authors 6893 modules.

Jonathan presented an overview of .NET and discussion of why interoperability was a priority for the Perl community (and indeed for all software). I think the audience was receptive, after all Perl has always defined itself as a glue language linking other software components in useful ways to improve funtionality.

His basic structure was to say that there are three ways to interoperate:

- Exchanging XML Documents (datasets, xml serialisation, configuration)

- HTTP Remoting (serialisable objects, marshalbyref objects)

- Web Services

One of the easiest ways for Perl to interoperate is to have Perl serialise an object into an XML structure (using XML::Simple) and use this to exchange data with other .NET (or indeed any web service). However, there are limitations with this approach.

Jonathan did mention that a problem people have is assuming that they can call remote constructors and then they make assumptions about what they can access remotely, whereas all this actually depends on the implementation of that remote "object". Personally, I like to think of web services as not being at all like CORBA (remote object references) but as being essentially a message passing mechanism without any assumption that you ever comunicate with the same remote object. If you never think of it as communicating with a remote object, then you are less likely to make these conceptual errors.

YAPC::Europe (Belfast)

I am attending my first Perl conference: YAPC::Europe in Belfast (YAPC means Yet Another Perl Conference). This is quite fun for me as I have had a long interst in Perl (both for UNIX/Linux system administration, and for web programming) but haven't had as much time to spend on it recently. I use lots of Perl (like the MovableType scripts that power this weblog) but do not write much code at the moment.

The keynote address was by Allison Randal of The Perl Foundation that was a good update on Perl (Perl5 and Perl6 release news), Parrot (the new Virtual Machine for non strongly-typed languages like Perl) and Ponie (bridging Perl5 and Perl6).

There was an excellent talk on HTTP:Proxy by Philippe 'Book' Bruhat. It looks to me like this could be deployed as an IPv4 or an IPv6 proxy (this dependency is on LWP rather than Philippe's module). He uses it himself on his own machine to do the usual advertisemnt stripping, but can change character sets, and customise the appearance of any external website. This means one can view all web-based material based on one's personal preferences. His examples forcused on the Dragon Go Server (i.e. on-line playing of the Japenese/Chinese game of Go) which he customised to supress the text message box (as it made the submit button too far down the page) unless he explictly requested it by clicking on the remaining link. It can also be used to log personal browsing habits for potential data mining later. The system is based on small filter scripts (mainly using regular expression matches and substitions) that looks easy enough to master. This is a very flexible tool for web-based material, or as he says himself "All of your web pages belong to us!"

14 September 2004

Linux standards base Version 2.0 has C++ support

It is good to see development of the LSB to avoid fragmentation of the Linux development platform: InfoWorld: Linux standards base Version 2.0 has C support: September 13, 2004: By Ed Scannell : APPLICATION_DEVELOPMENT : APPLICATIONS : NETWORKING : WEB_SERVICES This could help make the Linux platform a viable target for developers, and ensure that by following a single standard that deployment is possible on all compliant Linux distributions (most have signed up).

12 September 2004

Valid XHTML/HTML

I just re-edited my homepage, TSSG WIT: Mícheál Ó Foghlú's Home Page, to make it HTML 4.01 Transitional valid. Not too much trouble. However to make this weblog itself (i.e. the index pages and entry pages) XHTML 1.0 Transitional valid is much trickier as there are often "&" symbols buried in URLs that I just pasted in when I posted a link (and the odd one I typed myself inadvertantly). I've left it on the long finger for now.

6 September 2004

Mod_pubsub

An interesting project on enabling easyly deployable Publish & Subscribe mechanisms using client-side Javascript in a browser, serv-erside mod_perl on Apache, and HTTP as a transport mod_pubsub Project FAQ

Old Internet Services Predictions

I like this list of Trend Wars from IEEE Concurrency Vol. 8, No. 1, January - March 2000

It clearly shows how wireless access technologies were poised to be the next big thing in 2000. You could almost say exactly the same today, but perhaps be less cagey about 3G as it has firmed up quite a bit.

The article is based on interviews with a number of experts:

Eric A. Brewer is an assistant professor at the University of California, Berkeley in the Department of Computer Science. He cofounded Inktomi Corporation in 1996, and is currently its chief scientist. He received his BS in computer science from UC Berkeley, and his MS and PhD from MIT. He is a Sloan Fellow, an Okawa Fellow, and a member of Technology Review's TR100, Computer Reseller News' Top 25 Executives, Forbes "E-gang," and the Global Economic Forum's Global Leaders for Tomorrow.

Fred Douglis is the head of the Distributed Systems Research Department at AT&T Labs- Research. He has taught distributed computing at Princeton University and the Vrije Universiteit, Amsterdam. He has published several papers in the area of World Wide Web performance and is responsible for the AT&T Internet Difference Engine, a tool for tracking and viewing changes to resources on the Web. He chaired the 1998 Usenix Technical Conference and 1999 Usenix Symposium on Internetworking Technologies and Systems, and is program cochair of the 2001 Symposium on Applications and the Internet (SAINT). He has a PhD in computer science from the University of California, Berkeley.

Peter Druschel is an assistant professor of computer science at Rice University in Houston, Texas. He received his MS and PhD in computer science from the University of Arizona. His research interests include operating systems, computer networking, distributed systems, and the Internet. He currently heads the ScalaServer project on scalable cluster-based network servers.

Gary Herman is director of the Internet and Mobile Systems Laboratory in Hewlett-Packard Laboratories, Palo Alto, CA, and Bristol, UK. His organization is responsible for HP's research on technologies required for deploying and operating the service delivery infrastructure for the future Internet, including the opportunities created by broadband and wireless connectivity. Prior to joining HP in 1994, he held positions at Bellcore, Bell Laboratories, and the Duke University Medical Center. He received his PhD in electrical engineering from Duke University.

Franklin Reynolds is a senior research engineer at Nokia Networks and works at the Nokia Research Center in Boston. His interests include ad hoc self-organizing distributed systems, operating systems, and communications protocols. Over the years he has been involved in the development of various operating systems ranging from small, real-time kernels to fault-tolerant, distributed systems.

Munindar Singh is an assistant professor in computer science at North Carolina State University. His research interests are in multiagent systems and their applications in e-commerce and personal technologies. Singh received a BTech from the Indian Institute of Technology, Delhi, and a PhD from the University of Texas, Austin. His book, Multiagent Systems, was published by Springer-Verlag in 1994. Singh is the editor-in-chief of IEEE Internet Computing.

The interview is based on the following questions:

Internet Technology Questions

1. In retrospect, what were the decisive turning points for Internet and WWW technology to become ubiquitous and pervasive?

2. What are the next likely disruptive technologies in Internet space that might make new marks in the way we live and work?

3. What are the most important technologies that will determine the future Internet's speed and direction?

4. Where do you see the roles of industry, startups, research labs, universities, "open source" companies, and standard organizations in shaping the future Internet?

5. What will be the major application areas dominating the Web?

6. What is the most controversial and unpredictable technology in the Internet space?

Why not take this prediction test yourself today in September 2004?

19 August 2004

RSS Weather

Thanks to Brian Delahunty in the TSSG for pointing out that you can now get RSS feeds for weather stations. The nearest one to us is Cork Airport: RSS 2.0 RSS Feed for weather at Cork Airport, Ireland.

You can Choose your nearest weather station.

14 August 2004

REST Web Services: Best Practices and Guidelines

An interesting O'Reilly article: XML.com: Implementing REST Web Services: Best Practices and Guidelines

Implementing REST Web Services: Best Practices and Guidelines by Hao He August 11, 2004 Despite the lack of vendor support, Representational State Transfer (REST) web services have won the hearts of many working developers. For example, Amazon's web services have both SOAP and REST interfaces, and 85% of the usage is on the REST interface. Compared with other styles of web services, REST is easy to implement and has many highly desirable architectural properties: scalability, performance, security, reliability, and extensibility. Those characteristics fit nicely with the modern business environment, which commands technical solutions just as adoptive and agile as the business itself.A few short years ago, REST had a much lower profile than XML-RPC, which was much in fashion. Now XML-RPC seems to have less mindshare. People have made significant efforts to RESTize SOAP and WSDL. The question is no longer whether to REST, but instead it's become how to be the best REST?

The purpose of this article is to summarize some best practices and guidelines for implementing RESTful web services. I also propose a number of informal standards in the hope that REST implementations can become more consistent and interoperable.

The following notations are used in this article:

BP: best practice

G: general guideline

PS: proposed informal standard

TIP: implementation tip

AR: arguably RESTful -- may not be RESTful in the strict senseReprising REST

Let's briefly reiterate the REST web services architecture. REST web services architecture conforms to the W3C's Web Architecture, and leverages the architectural principles of the Web, building its strength on the proven infrastructure of the Web. It utilizes the semantics of HTTP whenever possible and most of the principles, constraints, and best practices published by the TAG also apply.

The REST web services architecture is related to the Service Oriented Architecture. This limits the interface to HTTP with the four well-defined verbs: GET, POST, PUT, and DELETE. REST web services also tend to use XML as the main messaging format.

[G] Implementing REST correctly requires a resource-oriented view of the world instead of the object-oriented views many developers are familiar with.

Resource

One of the most important concepts of web architecture is a "resource." A resource is an abstract thing identified by a URI. A REST service is a resource. A service provider is an implementation of a service.

URI Opacity [BP]

The creator of a URI decides the encoding of the URI, and users should not derive metadata from the URI itself. URI opacity only applies to the path of a URI. The query string and fragment have special meaning that can be understood by users. There must be a shared vocabulary between a service and its consumers.

Query String Extensibility [BP, AR]

A service provider should ignore any query parameters it does not understand during processing. If it needs to consume other services, it should pass all ignored parameters along. This practice allows new functionality to be added without breaking existing services.

[TIP] XML Schema provides a good framework for defining simple types, which can be used for validating query parameters.

Deliver Correct Resource Representation [G]

A resource may have more than one representation. There are four frequently used ways of delivering the correct resource representation to consumers:

Server-driven negotiation. The service provider determines the right representation from prior knowledge of its clients or uses the information provided in HTTP headers like Accept, Accept-Charset, Accept-Encoding, Accept-Language, and User-Agent. The drawback of this approach is that the server may not have the best knowledge about what a client really wants.

Client-driven negotiation. A client initiates a request to a server. The server returns a list of available of representations. The client then selects the representation it wants and sends a second request to the server. The drawback is that a client needs to send two requests.

Proxy-driven negotiation. A client initiates a request to a server through a proxy. The proxy passes the request to the server and obtains a list of representations. The proxy selects one representation according to preferences set by the client and returns the representation back to the client.

URI-specified representation. A client specifies the representation it wants in the URI query string.

Server-Driven Negotiation [BP]

When delivering a representation to its client, a server MUST check the following HTTP headers: Accept, Accept-Charset, Accept-Encoding, Accept-Language, and User-Agent to ensure the representation it sends satisfies the user agent's capability.

When consuming a service, a client should set the value of the following HTTP headers: Accept, Accept-Charset, Accept-Encoding, Accept-Language, and User-Agent. It should be specific about the type of representation it wants and avoid "*/*", unless the intention is to retrieve a list of all possible representations.

A server may determine the type of representation to send from the profile information of the client.

URI-Specified Representation [PS, AR]

A client can specify the representation using the following query string:mimeType={mime-type}

A REST server should support this query.Different Views of a Resource [PS, AR]

A resource may have different views, even if there is only one representation available. For example, a resource has an XML representation but different clients may only see different portion of the same XML. Another common example is that a client might want to obtain metadata of the current representation.To obtain a different view, a client can set a "view" parameter in the URI query string. For example:

GET http://www.example.com/abc?view=meta

where the value of the "view" parameter determines the actual view. Although the value of "view" is application specific in most cases, this guideline reserves the following words:"meta," for obtaining the metadata view of the resource or representation.

"status," for obtaining the status of a request/transaction resource.Service

A service represents a specialized business function. A service is safe if it does not incur any obligations from its invoking client, even if this service may cause a change of state on the server side. A service is obligated if the client is held responsible for the change of states on server side.Safe Service

A safe service should be invoked by the GET method of HTTP. Parameters needed to invoke the service can be embedded in the query string of a URI. The main purpose of a safe service is to obtain a representation of a resource.Service Provider Responsibility [BP]

If there is more than one representation available for a resource, the service should negotiate with the client as discussed above. When returning a representation, a service provider should set the HTTP headers that relate to caching policies for better performance.A safe service is by its nature idempotent. A service provider should not break this constraint. Clients should expect to receive consistent representations.

Obligated Services [BP]

Obligated services should be implemented using POST. A request to an obligated service should be described by some kind of XML instance, which should be constrained by a schema. The schema should be written in W3C XML Schema or Relax NG. An obligated service should be made idempotent so that if a client is unsure about the state of its request, it can send it again. This allows low-cost error recovery. An obligated service usually has the simple semantic of "process this" and has two potential impacts: either the creation of new resources or the creation of a new representation of a resource.Asynchronous Services

One often hears the criticism that HTTP is synchronous, while many services need to be asynchronous. It is actually quite easy to implement an asynchronous REST service. An asynchronous service needs to perform the following:Return a receipt immediately upon receiving a request.

Validate the request.

If the request if valid, the service must act on the request as soon as possible. It must report an error if the service cannot process the request after a period of time defined in the service contract.

Request Receipt

An example receipt is shown below:

A receipt is a confirmation that the server has received a request from a client and promises to act on the request as soon as possible. The receipt element should include a received attribute, the value of which is the time the server received the request in WXS dateTime type format. The requestUri attribute is optional. A service may optionally create a request resource identified by the requestUri. The request resource has a representation, which is equivalent to the request content the server receives. A client may use this URI to inspect the actual request content as received by the server. Both client and server may use this URI for future reference.

However, this is application-specific. A request may initiate more than one transaction. Each transaction element must have a URI attribute which identifies this transaction. A server should also create a transaction resource identified by the URI value. The transaction element must have a status attribute whose value is a URI pointing to a status resource. The status resource must have an XML representation, which indicates the status of the transaction.

Transaction

A transaction represents an atomic unit of work done by a server. The goal of a transaction is to complete the work successfully or return to the original state if an error occurs. For example, a transaction in a purchase order service should either place the order successfully or not place the order at all, in which case the client incurs no obligation.Status URI [BP, AR]

The status resource can be seen as a different view of its associated transaction resource. The status URI should only differ in the query string with an additional status parameter. For example:Transaction URI: http://www.example.com/xyz2343 Transaction Status URI: http://www.example.com/xyz2343?view=status

Transaction Lifecycle [G]

A transaction request submitted to a service will experience the following lifecycle as defined in Web Service Management: Service Life Cycle:Start -- the transaction is created. This is triggered by the arrival of a request.

Received -- the transaction has been received. This status is reached when a request is persisted and the server is committed to fulfill the request.

Processing -- the transaction is being processed, that is, the server has committed resources to process the request.

Processed -- processing is successfully finished. This status is reached when all processing has completed without any errors.

Failed -- processing is terminated due to errors. The error is usually caused by invalid submission. A client may rectify its submission and resubmit. If the error is caused by system faults, logging messages should be included. An error can also be caused by internal server malfunction.

Final -- the request and its associated resources may be removed from the server. An implementation may choose not to remove those resources. This state is triggered when all results are persisted correctly.

Note that it is implementation-dependent as to what operations must be performed on the request itself in order to transition it from one status to another. The state diagram of a request (taken from Web Service Management: Service Life Cycle) is shown below:

As an example of the status XML, when a request is just received:

The XML contains a state attribute, which indicates the current state of the request. Other possible values of the state attribute are processing, processed, and failed.

When a request is processed, the status XML is (non-normative):

This time, a result element is included and it points to a URL where the client can GET request results.

In case a request fails, the status XML is (non-normative):

A bad request.

line 3234

A client application can display the message enclosed within the message tag. It should ignore all other information. If a client believes that the error was not caused by its fault, this XML may serve as a proof. All other information is for internal debugging purposes.Request Result [BP]

A request result view should be regarded as a special view of a transaction. One may create a request resource and transaction resources whenever a request is received. The result should use XML markup that is as closely related to the original request markup as possible.Receiving and Sending XML [BP]

When receiving and sending XML, one should follow the principle of "strict out and loose in." When sending XML, one must ensure it is validated against the relevant schema. When receiving an XML document, one should only validate the XML against the smallest set of schema that is really needed. Any software agent must not change XML it does not understand.An Implementation Architecture

The architecture represented above has a pipe-and-filter style, a classical and robust architectural style used as early as in 1944 by the famous physicist, Richard Feynman, to build the first atomic bomb in his computing team. A request is processed by a chain of filters and each filter is responsible for a well-defined unit of work. Those filters are further classified as two distinct groups: front-end and back-end. Front-end filters are responsible to handle common Web service tasks and they must be light weight. Before or at the end of front-end filters, a response is returned to the invoking client.

All front-end filters must be lightweight and must not cause serious resource drain on the host. A common filter is a bouncer filter, which checks the eligibility of the request using some simple techniques:

IP filtering. Only requests from eligible IPs are allowed.

URL mapping. Only certain URL patterns are allowed.

Time-based filtering. A client can only send a certain number of requests per second.

Cookie-based filtering. A client must have a cookie to be able to access this service.

Duplication-detection filter. This filter checks the content of a request and determines whether it has received it before. A simple technique is based on the hash value of the received message. However, a more sophisticated technique involves normalizing the contents using an application-specific algorithm.

A connector, whose purpose is to decouple the time dependency between front-end filters and back-end filters, connects front-end filters and back-end filters. If back-end processing is lightweight, the connector serves mainly as a delegator, which delegates requests to its corresponding back-end processors. If back-end processing is heavy, the connector is normally implemented as a queue.Back-end filters are usually more application specific or heavy. They should not respond directly to requests but create or update resources.

This architecture is known to have many good properties, as observed by Feynman, whose team improved its productivity many times over. Most notably, the filters can be considered as a standard form of computing and new filters can be added or extended from existing ones easily. This architecture has good user-perceived performance because responses are returned as soon as possible once a request becomes fully processed by lightweight filters. This architecture also has good security and stability because security breakage and errors can only propagate a limited number of filters. However, it is important to note that one must not put a heavyweight filter in the front-end or the system may become vulnerable to denial-of-service attacks.

5 August 2004

Jon Udell likes Bloglines too

As usuall Jon Udell captures the moment with this detailed description of why he likes Bloglines | Jon Udell.

Since last fall, I've been recommending Bloglines to first-timers as the fastest and easiest introduction to the subscription side of the blogosphere. Remarkably, this same application also meets the needs of some of the most advanced users. I've now added myself to that list. Hats off to Mark Fletcher for putting all the pieces together in such a masterful way.What goes around comes around. Five years ago, centralized feed aggregators -- my.netscape.com and my.userland.com -- were the only game in town. Fat-client feedreaders only arrived on the scene later. Because of the well-known rich-versus-reach tradeoffs, I never really settled in with one of those. Most of the time I've used the Radio UserLand reader. It is browser-based, and it normally points to localhost, but I've been parking Radio UserLand on a secure server so that I can read the feeds it aggregates for me from anywhere.

Bloglines takes that idea and runs with it. Like the Radio UserLand reader, it supports the all-important (to me) consolidated view of new items. But its two-pane interface also shows me the list of feeds, highlighting those with new entries, so you can switch between a linear of scan of all new items and random access to particular feeds. Once you've read an item it vanishes, but you can recall already-read items like so:

Display items within the last Session1 Hour6 Hours12 Hours24 Hours48 Hours72 HoursWeekMonthAll Items

If a month's worth of some blog's entries produces too much stuff to easily scan, you can switch that blog to a titles-only view. The titles expand to reveal all the content transmitted in the feed for that item.

I haven't gotten around to organizing my feeds into folders, the way other users of Bloglines do, but I've poked around enough to see that Bloglines, like Zope, handles foldering about as well as you can in a Web UI -- which is to say, well enough. With an intelligent local cache it could be really good; more on that later.

Bloglines does two kinds of data mining that are especially noteworthy. First, it counts and reports the number of Bloglines users subscribed to each blog. In the case of Jonathan Schwartz's weblog, for example, there are (as of this moment) 253 subscribers.

Second, Bloglines is currently managing references to items more effectively than the competition. I was curious, for example, to gauge the reaction to the latest salvo in Schwartz's ongoing campaign to turn up the heat on Red Hat. Bloglines reports 10 References. In this case, the comparable query on Feedster yields a comparable result, but on the whole I'm finding Bloglines' assembly of conversations to be more reliable than Feedster's (which, however, is still marked as 'beta'). Meanwhile Technorati, though it casts a much wider net than either, is currently struggling with conversation assembly.

I love how Bloglines weaves everything together to create a dense web of information. For example, the list of subscribers to the Schwartz blog includes: judell - subscribed since July 23, 2004. Click that link and you'll see my Bloglines subscriptions. Which you can export and then -- if you'd like to see the world through my filter -- turn around and import.

Moving my 265 subscriptions into Bloglines wasn't a complete no-brainer. I imported my Radio UserLand-generated OPML file without any trouble, but catching up on unread items -- that is, marking all of each feed's sometimes lengthy history of items as having been read -- was painful. In theory you can do that by clicking once on the top-level folder containing all the feeds, which generates the consolidated view of unread items. In practice, that kept timing out. I finally had to touch a number of the larger feeds, one after another, in order to get everything caught up. A Catch Up All Feeds feature would solve this problem.

[Update: The feature, of course, exists. Thanks to David Ron for pointing this out. The reason I didn't find it: the Mark All Read link is right-aligned at the top of the left pane, and not bound to the other controls found there. Since I have some feeds with very long titles, it's necessary to scroll rightward in the left pane to find the Mark All Read control. Operator error on my part, but I'm sure I'm not the only one.]

Another feature I'd love to see is Move To Next Unread Item -- wired to a link in the HTML UI, or to a keystroke, or ideally both.

Finally, I'd love it if Bloglines cached everything in a local database, not only for offline reading but also to make the UI more responsive and to accelerate queries that reach back into the archive.

Like Gmail, Bloglines is the kind of Web application that surprises you with what it can do, and makes you crave more. Some argue that to satisfy that craving, you'll need to abandon the browser and switch to RIA (rich Internet application) technology -- Flash, Java, Avalon (someday), whatever. Others are concluding that perhaps the 80/20 solution that the browser is today can become a 90/10 or 95/5 solution tomorrow with some incremental changes.

Dare Obasanjo wondered, over the weekend, "What is Google building?" He wrote:

In the past couple of months Google has hired four people who used to work on Internet Explorer in various capacities [especially its XML support] who then moved to BEA; David Bau, Rod Chavez, Gary Burd and most recently Adam Bosworth. A number of my coworkers used to work with these guys since our team, the Microsoft XML team, was once part of the Internet Explorer team. It's been interesting chatting in the hallways with folks contemplating what Google would want to build that requires folks with a background in building XML data access technologies both on the client side, Internet Explorer and on the server, BEA's WebLogic. [Dare Obasanjo]

It seems pretty clear to me. Web applications such as Gmail and Bloglines are already hard to beat. With a touch of alchemy they just might become unstoppable.

Yes, we all agree. I couldn't have put it better myself.

4 August 2004

W3C and OMA to collaborate on specifications to enable mobile services

Standards Bodies to Give the Web Legs By Clint Boulton July 30, 2004

In a move to get more users to access the Web via mobile devices, the World Wide Web Consortium (W3C) and the Open Mobile Alliance (OMA) have inked an agreement to collaborate on specifications.The two standards bodies Thursday said they will share information to guard against creating dueling standards for making it easier for users to access the Internet via Web-enabled phones, cameras or personal digital assistants.

The W3C and OMA, which develops standards for mobile data services, will share technical information and specs to help provide solid, workable standards that benefit developers, product and service providers and users. The groups will hold meetings together to discuss each other's progress, but W3C officials said no timetable has been set for the meetings.

Max Froumentin, spokesman for the W3C's Multimodal and Voice Working Group, whose group writes specs to adapt Web content on mobile gadgets, said the move is an attempt to avoid doing the same work twice -- and differently.

"Now that we have devices that access the Web, there is a potential overlap between standards bodies," Froumentin told internetnews.com.

For example, Froumentin's group works on multimodal communications that allow speech recognition, keyboard, touch screen and a stylus to be used in the same session. This would alleviate the clumsiness of using a keyboard on a mobile smartphone. OMA could conceivably craft service standards that repeat the work of the multimodal group, causing redundancies.

The W3C/OMA pact comes at a time when the demand for mobile applications based on platforms such as Microsoft's .NET or Java is growing despite the relative immaturity of technologies and the lack of standards to facilitate them. W3C and OMA hope to change that by collaborating on common specs that may evolve into standards.

Philipp Hoschka, Interaction Domain Leader at the W3C, said another reason for the pact is a significant uptake in the interest of the W3C's mobile Scalable Vector Graphics (SVG) and Synchronized Multimedia Integration Language (SMIL).

"These are very much driven by the needs of the mobile community," Hoschka told internetnews.com, noting that SVG and SMIL are the basis for Multimedia Messaging Service, a descendant of Short Messaging Service, which the OMA is working on. Hoschka said MMS will allow users to send applications that support slide shows, audio and video from mobile devices.

In other standards news, the Securities and Exchange Commission said it is seeking public comment on alternative methods and the costs and benefits associated with data tagged by Extensible Business Reporting Language (XBRL), an open specificatio n for software that uses XML data tags to describe financial information for businesses.

The SEC said in a statement it will consider an SEC staff proposal to accept voluntary supplemental filings of financial data using XBRL, which would help the agency get a gauge on the types of data tagging currently available in the market.

The agency may propose a rule this fall that would establish the voluntary XBRL-tagged filing program beginning with the 2004 calendar year-end reporting season.

Industry experts love the possibilities of XBRL, which is heartily supported by software giant Microsoft, which produces its financial statements in XBRL.

13 July 2004

W3C Releases Public Working Draft for Full-Text Searching of XML Text and Documents

The W3C has released the Public Working Draft for Full-Text Searching of XML Text and Documents (link to "The Cover Pages" article on the announcement). This draft is entitled XQuery 1.0 and XPath 2.0 Full-Text. To quote "The Cover Pages" article:

As defined by the draft, "full-text queries are performed on text which has been tokenized, i.e., broken into a sequence of words, units of punctuation, and spaces." New full-text search facility is implemented by extending the XQuery and XPath languages to support a new "FTContainsExpr" expression and a new "ft:score" function.Expressions of the type FTSelection are composed of:(1) words or combinations of words that are the search strings to be found as matches; (2) Match options such as case sensitivity or an indication to use stop words; (3) Boolean operators that allow composition of an FTSelection from simpler FTSelections; (4) Positional constraints such as indication of match distance or window.

The new Full-Text Working Draft endeavors to meet search requirements specified in an updated companion draft XQuery 1.0 and XPath 2.0 Full-Text Use Cases. This document provides use cases designed to "illustrate important applications of full-text querying within an XML query language. Each use case exercises a specific functionality relevant to full-text querying. An XML Schema and sample input data are provided; each use case specifies a query applied to the input data, a solution in XQuery, a solution in XPath (when possible), and the expected results."

Full-text query designed as an extension of XQuery and XPath will support several kinds of searches not possible using simple substring matching. It allows precision querying of XML documents containing "highly-structured data (numbers, dates), unstructured data (untagged free-flowing text), and semi-structured data (text with embedded tags).

Language-based query and token-based searches are also supported; for example, find all the news items that contain a word with the same linguistic stem as the English word "mouse" which finds occurrences of both "mouse" and "mice" together with possessive forms.

Tokenization serves as the basis for full-text search in the W3C draft. Words, spaces, and punctuation are distinguished. A "word is defined as any character, n-gram, or sequence of characters returned by a tokenizer as a basic unit to be queried; consecutive words need not be separated by either punctuation or space, and words may overlap; a phrase is a sequence of ordered words which can contain any number of words." This model "enables functions and operators which work with the relative positions of words (e.g., proximity operators). It uniquely identifies sentences and paragraphs in which words appear. Tokenization also enables functions and operators which operate on a part or the root of the word, e.g., wildcards, stemming."

The W3C XQuery and XSL Working Groups invite public comment on the two full-text query drafts.

Putting this within the broader XQuery context:

"The mission of the XML Query Project is to provide flexible query facilities to extract data from real and virtual documents on the World Wide Web, therefore finally providing the needed interaction between the Web world and the database world. Ultimately, collections of XML files will be accessed like databases. The ambitious task of the XML Query (XQuery) Working Group is therefore to develop the first world standard for querying web documents, following the incredibly successful discussion started at the QL'98 event. However, the XML Query (XQuery) project is all-around, and also includes in its efforts not only the standard for querying XML documents, but also the next-generation standards for doing XML selection (XPath2), for doing XML serialization, for doing Full-Text Search, for providing a possible functional XML Data Model, and for providing a standard set of functions and operators for manipulating web data..." [from the XQuery page]

The development of robust searching techniques within XML documents is a crucial underlying technology for many evolving areas of distributed computing and eBusiness/eCommerce. It will be interesting to see if document-centric XML-based approaches mature the same way that relational database approaches have over the past 20 years to become a core compent of nearly all systems. However, trying to capture complex natural language concepts like alternative irregular plurals automatically in search queries may be pushing things further than can be actually be achieved in real world implementations at present.

6 July 2004

Useful Articles on Routing

This is the latest in a set of useful postings (Chapters) describing routing: Elguapo's Guide to Routing - Part 4, OSPF || kuro5hin.org

Chapter 1 was an introduction. Chapter 2 was RIP. Chapter 3 was BGP. Chapter 5 will be the Interior Gateway Routing Protocol (IGRP). Chapter 6 will be the Enhanced Interior Gateway Routing Protocol (EIGRP). Chapter 7 will be the Intermediate System to Intermediate System protocol.

IPv9 - April Fool or Reality?

I saw some recent postings about IPv9 in China that had me very confused as any prior mentions of IPv9 (as I discivered from a Google search) had been April Fool's jokes. However the latest reports China disowns IPv9 hype | The Register do seem to have some reality, but are "true, but probably unimportant" (Gag Helfront's catch phrase in "The Hitch Hiker's Guide to the Galaxy" by Douglas Adams). I'll stick to my interest in IPv6 for now. Here's the text of that article in full:

Evidence is growing that IPv9, hyped up the widely-adopted foundation of a next generation Internet infrastructure in China, is really a marginal project backed by few even in China.Reports from China this week about widespread adoption of the previously unheard of Internet protocol have created bewilderment and something approaching a diplomatic incident in the sysadmin community.

Vint Cerf, SVP of technology strategy at MCI, and one of chief architects of the modern Internet, was bewildered by the reports. In an email sent to senior figures in the Chinese Internet community, he asked: "What could this possibly be about? As far as I know, IANA [Internet Assigned Numbers Authority] has not allocated the IPv9 designation to anyone. IPv9 is not an Internet standard. Could you please explain what is intended here? I am disturbed by the reference to root servers, 'control'. What is the 'ten digit text file' all about? Who is behind the Shanghai Jiuyao Digital Network Company?"

Professor Hualin Qian of the Computer Network Information Center of the Chinese Academy of Sciences described IPv9 as a research project that turned out to have serious practical shortcomings and little support.

"CNIC explains IPv9 is proposed by the director, Mr. Xie Jian-Ping, of the Institute of Chemical Engineering located in Shanghai. Two years ago, Chinese Academy of Sciences (CAS) invited them to introduce their idea about IPv9. According to my understanding, their proposal includes two main aspects: the first one is IPv9, the second one is Digital Domain Names.

"For IPv9, they think that the address space of IPv6 (128bits long) is not enough for future use, they expanded the IP address to 256 bits. I don't think the protocols for IPv9 have major difference from IPv6 except the longer IP address. Almost all the people working on networks in CAS do not agree with their opinion, because there is not any evidence showing that the IPv6 address is not enough and using 256 bits source and destination IP address will increase the overhead of an IP packet. And when communicating with IPv4/IPv6, equipment such as NAT-PT [Network Address Translation] must be installed. This will be the bottle neck for future high capacity interconnection with IPv4 and IPv6 global Internet."

Hualin added that IPv9 is unfamiliar to network experts from Fudan University in Shanghai who "do not know any deployment of IPv9 in Shanghai" contrary to initial reports by China's official news agency, Xinhua.

Tim Chown of Southampton University, and technology adviser to the IPv6 Task Force in the UK, told El Reg: "The consensus now seems to be it is one researcher or group trying to promote a 256-bit adaptation of IPv6, but it doesn't yet seem to have much traction. It is hard to tell how serious it is, or whether it is a complete non-starter in the same way as Jim Fleming's ludicrous IPv8 is. There may well be some sensible ideas behind IPv9, but IPv6 is the system that is standardised and now (very) widely implemented."

I know Tim Chown from various EU IPv6 Cluster meetings and so I'm happy to discount IPv9 for now.

4 July 2004

RDF for the Desktop

I'm glad to see moves to push RDF onto the desktop as metadata for desktop resources: 2004: Metadata for the desktop. This ties in my my recent postings about MS Longhorn's new filesystem and Jon Udell's musings on these matters.

2 July 2004

Linux kernel: Moving closer to Windows?

This article from ADNet shows that there may be more similarities between Windows and Linux kernels than you might think. Linux kernel: Moving closer to Windows? (ZDNet UK News)

Home Wi-Fi hotspots typically not encrypted

Whilst Wi-Fi security is a complex issue, is is worrying that many people use no form of encryption as reported in: Security-Free Wireless Networks (Wired News, 30th May 2004).

"SAN JOSE, California -- With a laptop perched in the passenger seat of his Toyota 4Runner and a special antenna on the roof, Mike Outmesguine ventured off to sniff out wireless networks between Los Angeles and San Francisco. He got a big whiff of insecurity."While his 800-mile drive confirmed that the number of wireless networks is growing explosively, he also found that only a third used basic encryption -- a key security measure. In fact, in nearly 40 percent of the networks not a single change had been made to the gear's wide-open default settings.

...

"During his wardrive, Outmesguine counted 3,600 hot spots, compared with 100 on the same route in 2000. Worldwide, makers of Wi-Fi gear for homes and small offices posted sales of more than $1.3 billion in 2003, a 43 percent jump over 2002, according to Synergy Research Group."

1 July 2004

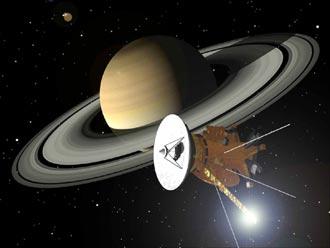

Cassini in Orbit Around Saturn

"The international Cassini-Huygens mission has successfully entered orbit around Saturn. At 9:12 p.m. PDT on Wednesday, flight controllers received confirmation that Cassini had completed the engine burn needed to place the spacecraft into the correct orbit. This begins a four-year study of the giant planet, its majestic rings and 31 known moons. " (from NASA Announcement)

Mono release ( .NET for other platforms)

Novell releases Mono (The Register)

Here's the full text of the Register posting:

"Novell has released the first version of Mono, which brings Microsoft's .NET framework to non-Microsoft platforms. It's available pre-compiled for SuSE and Red Hat distributions of Linux, and for Mac OS X. It's almost exactly three years since it was announced by Miguel de Icaza. Novell acquired Ximian last year."Mono is a run-time and C# compiler, but the team has included just-in-time support an IDE, and intriguingly, both a Visual Basic runtime and a Java VM. For a quick list of what's different from Microsoft's own .NET, consult this list. The biggest omissions in the initial release are lack of COM support - which may affect legacy applications - and no printing.

"The project has been controversial since the start, and remains so. Today the debate is about patents: Microsoft has claims on many core aspects of .NET. (See MS patents .Everything). But simply getting there and providing a level of compatibility is no small achievement. And keeping compatible is a problem Microsoft has with itself. ョ"

The new Mono website has a Mono Downloads section and a Resease Notes Mono 1.0 section as well as a blog posting Mono Release 1.0 Announcement.

Intelligent Buildings

Using Internet to Reduce Electricity Bills describes the use of XML-based collaborative communication between buildings to optimise electricity usage.

12 June 2004

Thoughts on Schooling

I came across Thoughts on Schooling posting on the Gurteen Knowledge-log (RSS feed URL link). This Gurteen is not, as it sounds, a small place in Ireland, but a knowledge management expert's on-line knowledge management system making heavy use of XML-based technologies such as RSS feeds and metadata. The content of this posting is interesting, I have long been a fan of Illich anti-institutional appraoch to education, but so is the actual website architecure and the use of a Moveable Type platform for an integrated knowedlge management system like this.

I am a huge fan of John Holt and you will find much about him on this website and in my knowledge-letters so I was delighted to find this nice little summary of some of John Holt's ideas on education and schooling in Robert Patersons weblog.Just a taste:

I think children learn better when they learn what they want to learn when they want to learn it, and how they want to learn it, learning for their own curiosity and not at somebody else's order.

Reading this, got me to thinking and searching the web some more on the subject of schooling and making the link between three people whose ideas and writings I greatly admire. John Holt of course but also Ivan Illich and Alfie Kohn.Ivan Illich also has some interesting things to say on schooling. This is how chapter 1 of his book Deschooling Society starts:

Many students, especially those who are poor, intuitively know what the schools do for them. They school them to confuse process and substance. Once these become blurred, a new logic is assumed: the more treatment there is, the better are the results; or, escalation leads to success. The pupil is thereby "schooled" to confuse teaching with learning, grade advancement with education, a diploma with competence, and fluency with the ability to say something new. His imagination is "schooled" to accept service in place of value. Medical treatment is mistaken for health care, social work for the improvement of community life, police protection for safety, military poise for national security, the rat race for productive work. Health, learning, dignity, independence, and creative endeavor are defined as little more than the performance of the institutions which claim to serve these ends, and their improvement is made to depend on allocating more resources to the management of hospitals, schools, and other agencies in question.

And to complete the trio Alfie Kohn who also has a great deal to say about schooling, teaching methods and the negative role of rewards and punishment.

11 June 2004

Semantic Web Debates

Two recent postings on the O'Reilly sponsored XML.com site shed some light on developments and debates in the W3C Semantic Web world (linking to my recent posting referencing Jon Udell's comments on Longhorn). Quoting from XML.com Xtra!:

A far-fetched vision of a utopian computing future? Not so, says Daniel Zambonini in our lead feature this week. In fact, all the component parts of web infrastructure already exist to make this a reality. Find out how tomorrow's web is already here today.

http://www.xml.com/pub/a/2004/06/09/tomorrow.html

In this week's XML-Deviant column, Kendall Clark looks at the arguments espoused by Semantic Web skeptics in the XML world. He also introduces a new working group at the W3C, the Data Access Working Group (DAWG). DAWG has been deputized to do standardization work on a query language and data access protocol for RDF. http://www.xml.com/pub/a/2004/06/09/deviant.html

10 June 2004

ZMailer test MX records and smtp

ZMailer test MX records and smtp and actually checks if an email address exists: Zmailer.org.

9 June 2004

Questions about Longhorn

Three excellent postings on Jon Udell's weblog (as usual) on the issues of Semantic Web (abbreviated to SemWeb), RDF and Microsoft's new file persistence layer in Longhorn.

Questions about Longhorn, part 1: WinFS

Questions about Longhorn, part 2: WinFS and semantics

Questions about Longhorn, part 3: Avalon's enterprise mission

(from part2 ....)

"It seems that the point being argued is that with RDF you can get more understanding of the information in the document than with just XML. Being that one could consider RDF as just a logical model layered on top of an XML document (e.g. RDF/XML) I find it hard to understand how viewing some XML document through RDF colored glasses buys one so much more understanding of the data. [Dare Obasanjo] Dare aims this critique at RDF/SemWeb, not WinFS, but I'll take the liberty of extending it to both. And I'll argue that in theory, an information system based on explicit knowledge representation -- using triples, or relationships, or whatever flavor of item-linking you prefer -- is way more powerful than a system in which the same knowledge is available only implicitly. But in practice, I wonder if anybody, whether it's Tim Berners-Lee or the Longhorn architects, can mandate such an approach given the chaotic messiness of reality. My favorite Joshua Allen quote, for example, is this one -- which I also used in my XML 2003 keynote: The lesson, of course, is that real-world information is chaotic. In any but the smallest "proof of concept" systems, the best that one can hope for is to be able to recognize small pockets of structure within a sea of otherwise unstructured information. [Joshua Allen]Maybe it depends how you construe "small pockets of structure." I've been getting decent mileage using nothing fancier than unschematized XML fragments. Microsoft, meanwhile, has taken a great leap forward in Office 2003 with support for schematized XML documents. The first glimmer of this stuff came almost two years ago. It shipped last fall. If asked to paraphrase the Office XML strategy then, I'd have put it this way:

Let's get schematized information out into the open, where any XML-aware tool can see it and touch it and work with it -- locally and globally, on Windows or any platform -- and then let's see what happens. If we play our cards right we'll broadly legitimize schematization, and we'll be able to use Windows to layer semantic value on top of it.

If asked to paraphrase the WinFS strategy now, I'd put it this way:

Let's put schematized information into Windows, where any CLR-aware Windows application can see it and touch it and work with it.The first strategy envisions a plurality of schemas arising from the grassroots. You won't often hear support for this strategy from Microsoft, but I heard it last fall at the Enterprise Architect Summit from Jean Paoli, who appeared (with Sun's Jon Bosak) on my panel Schemas in the wild.

The second strategy envisions a canonical set of schemas woven tightly into Longhorn. Years from now it'll ship. Years later, it'll reach critical mass, developers will have mastered its APIs, and schema-aware Windows apps could start to make a "semantic" way of organizing and finding information real for lots of people.

Why wait? Microsoft is telling us to disregard the grassroots Office XML strategy, which is here now and doesn't lock us in, in favor of the ivory-platform WinFS strategy, which is years away and does lock us in. If a compelling argument can be made for the second approach, I haven't seen it yet."

8 June 2004

AI for Service Composition

There are numerous AI Planner tools available (most based on Lisp/Prolog with backwards chaining of some description).

Firstly SHOP2 (Simple Hierarchical Ordered Planner). This page explictly lists a paper on the issue of using DAML-S (now OWL-S) service descriptions for service composition.

See also POP (Partial Order Planner).

Alternative platforms for this type of thing include JESS (Java Expert System Shell).

7 June 2004

Building Web Applications with Maypole (Perl-based Framework)

I recently came across this article Build Web appplications with Maypole (A Perl framework for quick and easy database-backed applications) by Simon Cozens on the IBM DeveloperWorks site.

It explains a perl framework based on the popluar MVC pattern (model-view-controller) that creates a logical three tier architecture separating presentation, business logic and data persistence layers.

I am very interested in design patterns and it is good see they are having an impact on light-weight scripting environments such as Perl as well as on more heavy-weight development environments.

21 May 2004

Cheap Airlines in Europe

I do a lot of travel, and try and get good value using cheap European airlines.

Sometimes they offer cheap one-way fares to lesser used airports near major European cities. This is just a reminder to myself of the best options.

Irish Airlines

UK & Continental European Airlines

- easyJet with UK hubs.

- BMIBaby with UK hubs.

- Air Europa with Madrid hub.

- Sterling with Scandinavian hubs.

- Germanwings with German hubs.

- Virgin Express UK company with Brussels and Amsterdam hubs.

10 May 2004

Sample ASP Code for Application

This is an on-line example of how to do an ASP application using VBScript: ASP American Football Pool. I'm linking it here as a reminder as it is a simple easily understood example that would be good for teaching 2nd/3rd year degree students how to do sensible design for an ASP-based project.

4 May 2004

LCC@Home

Kendall Grant Clark has published an interesting series of articles on the O'Reilly XML portal around the issue of creating a personal electronic catalogue of your home library. He's nicknamed the project LCC@Home as LCC is the cataloguing system of the Library of Congress in the USA.

My first significant computer project as a child was to try and catalogue my books at home (on a Sinclair ZX81 and later a Sinclair Spectrum). The database (in-memory BASIC data structures) and sorting worked (bubble sort), but the limited memory made the system pretty useless (I hadn't heard of merge-sort and how to manage large off-line storage with small memory at the time).

It is interesting to see that we're eventually reaching the time when it is possible to use on-line directories of data (such as the Library of Congress and the various music and movie/film databases) to produce high quality catalogues of home material.

3 May 2004

50 Million Websites

The monthly web server survey from Netcraft for May 2004 ahs found over 50 Million web sites Netcraft: May 2004 Web Server Survey Finds 50 Million Sites.

"The first Netcraft survey in August 1995 found 18,957 hosts. Previous milestones in the survey were reached in April 1997 (1 million sites), February 2000 (10 million), September 2000 (20 million) and July 2001 (30 million)."

22 April 2004

GNU screen

This entry GNU Screen: an introduction and beginner's tutorial || kuro5hin.org alterted me to a very useful utility, especially when connecting over flaky connections that may get dropped - just log back in and reconnect, cool.

Copenhagen Consensus

The blog alerted me to the A new way to save the World: The Copenhagen Consensus || kuro5hin.org. I had read the Skeptical Environmentalist and was intrigued, though not won over. For a more realistic consensus see UN Millenium Development Goals.

WordPress

It looks like WordPress

could be a contender to replace MoveableType, with some additional features a similer setup and maintenance. The main advantage for me is multiple categories for postings. I don't mind too much that it is PHP-based rather than Perl-based. Anyway, I may have time to check it out.

17 April 2004

Perl 6: Apocalypse 12

Perl 6, next next version of Perl 5, is being developed over a number of years through an open co-operative process informed by Perl's original creator, Larry Wall. As part of this process Larry releases regular statements of intent for the Perl 6 langauge, and on 16th April 2004 he released perl.com: Apocalypse 12 [Apr. 16, 2004] that address issues relatived to object-orientation in Perl 6. See the following for more information on Perl 6 Development and Parrot (a virtual machine for interpreted languages including Perl 6).

24 March 2004

Typekey and MovableType

TypeKey is a new authentication service for the soon to be released MovableType 3.0. It will be open and free and allow other web applications to use this service as a central authentication service. It has already started some heated discussions in the bloging community. The reasons behind this system are based upon a desire to make it simple for weblog authors to allow open comments to be posted on the weblogs without this facility being abused by spammers. Whether it will be accepted, and whether it will actually work have still to be proven. Thanks to Bernie Goldbach (irish.typepad.com) for raising this issue with me.

5 March 2004

Meaning (and what it means)

Doing some background reading today I came across the excellent on-line work of J. F. Sowa (who edited the draft proposed ANSI standard for conceptual graphs):

Categorization in Cognitive Computer Science and Ontology, Metadata, and Semiotics.

He almost makes me want to believe in Artificial Intelligence again.

I was certainly very stimulated by his references to Charles Sanders Peirce (1839-1914). I had come across the concept of deduction, induction, and abduction, but did not know its provenance.

2 March 2004

Joint AlbatrOSS & Opium Workshop

People often ask me what I do in the TSSG (Telecommunications Software & Systems Group), and sometimes I am a bit stuck for an answer. This is beause the constant involvement in trying to win funding for new projects in the general area of distributed computing and in communications software can be a bit abstract. Like many managers I spend a lot of time in meetings, this doesn't sound very exciting. However, I really beleive in what the TSSG are trying to achieve and it is usually quite hard to articulate this vision in a concrete way.

Recently however, two (of the 11 or so) projects our group is involved in at the moment held an open workshop and published on-line as much material as they could about this. The resulting page of links captures the type of activity we have done (in the two projects Opium and AlbatrOSS) and also where we're heading (in the overviews of the new projects: SEINIT, and Daidalos). Of course there are many links to other projects we're involved in, and these projects build on many previous strands of activity.

AlbatrOSS & Opium Workshop (Berlin 25th Feb 2004)

In summary these projects have looked at a wide range of issues facing services and service management in wireless networks, with particular focus on the telecommunications services in the 2.5G and 3G networking environments. Opium has promoted 2.5G and 3G interoperability testing between different operators and service providers. AlbatrOSS has tried to define an managament architecture for 3G services.

For further information see:

AlbatrOSS Overview

Opium Overview

TSSG Home Page

20 February 2004

Practical lightweight XML-based scripting

Jon Udell's article (Feb 2004) Lightweight XML Search Servers in the latest issue of XML.com highlights a number of useful technologies. I am particulary keen to see a real use of the XML ability of Berkely DB as a back end for a lightweight scripting utility. What is great about the article is how he describes the practical issues that arise and how he used existing open source tools to address most of these, rather than programming a monolithic application from scratch. This type of case study really illustrates the way programmers need to behave in today's world. My challenge as an educator is to help produce students who can do this type of thing.

12 February 2004

Using XHTML for partial semantic marking

Jon Udell is continuing to open up the debate on how to create a middle ground between the ideal of semantic web with rich metatdata, and the real world, now, of very un-marked up web content. Recent postings on his weblog like Jon Udell: Confession time and Jon Udell: Content-aware search show how through creative use of the XHTML/CSS attribute tags (potentially auto-generated) text-based resources like weblog entries can become a proximate knowledge-base.

21 January 2004

Mozilla SeaMonkey